
Multi-task ICL September 2023

Learning shared safety constraints from multi-task demos.

Regardless of the particular task we want our agents to perform in an environment, there are often shared safety constraints we want them to respect. Manually specifying such constraints can be both time-consuming and error-prone. We show how to learn constraints from expert demonstrations of safe task completion by extending inverse reinforcement learning (IRL) techniques to the space of constraints. Intuitively, we learn constraints that forbid highly rewarding behavior that the expert could have taken but chose not to.

Unfortunately, the constraint learning problem is rather ill-posed and typically leads to overly conservative constraints that forbid all behavior that the expert did not take. We counter this by leveraging diverse demonstrations that naturally occur in multi-task settings to learn a tighter set of constraints. We validate our method with simulation experiments on high-dimensional continuous control tasks.

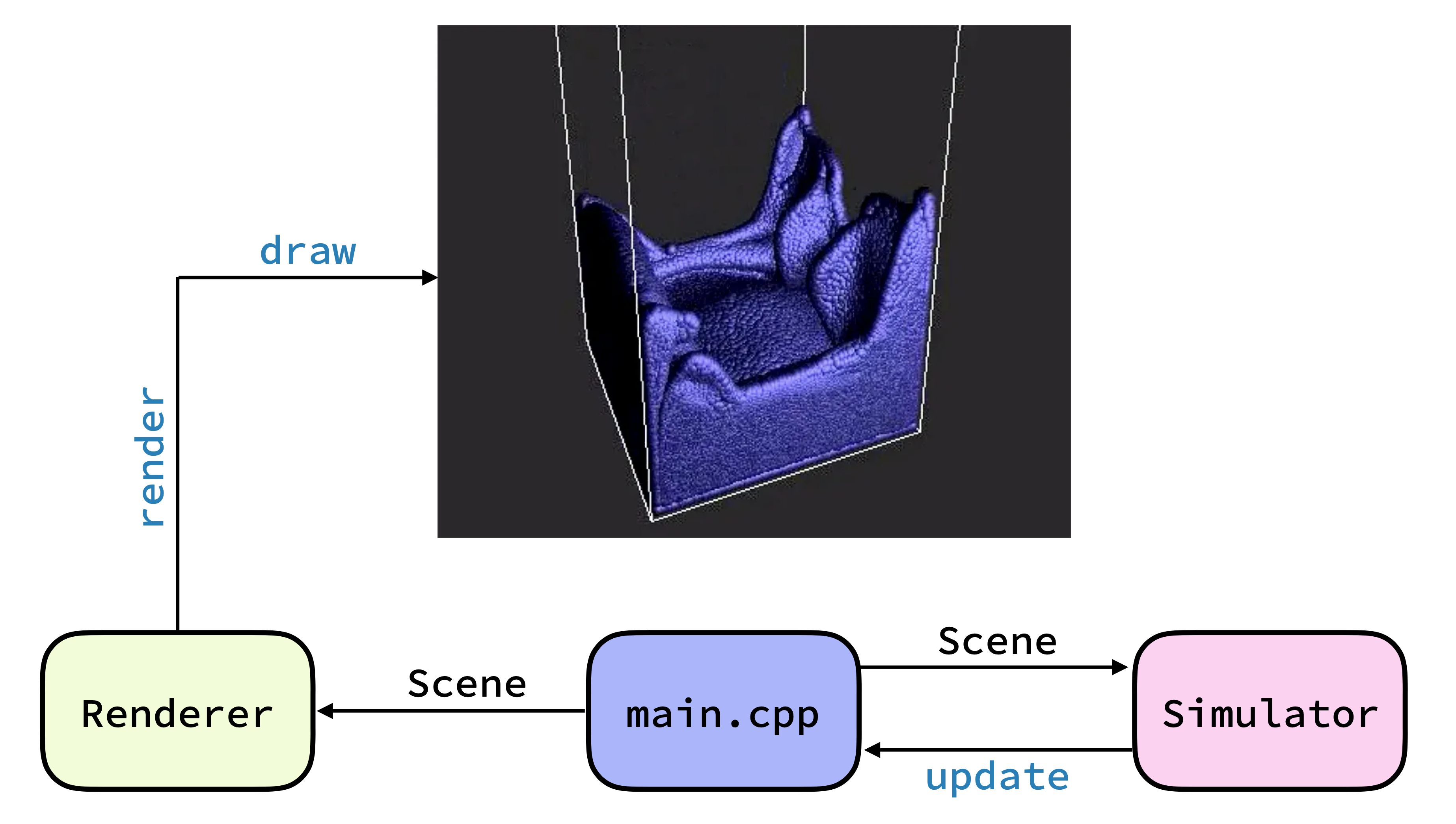

Position Based Fluids December 2022

Parallel fluid simulation in CUDA.

This is a sequential and parallel position-based fluid simulator implemented in C++ and CUDA with a custom renderer in OpenGL. The parallel simulator supports data parallelism, efficient neighbor computation using parallel counting sort, and multi-threaded rendering. It achieves speedups of up to 30x over the sequential simulator and supports real-time simulation and rendering of up to 100,000 particles.
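
To illustrate the neighbor computation mentioned above, here is a minimal sequential Python sketch of counting-sort-based grid binning. It is not the project's CUDA code: the function names (`cell_coords`, `build_grid`, `neighbors`), the cubic grid layout, and the assumptions that all particles lie inside the grid and that the search radius does not exceed the cell size are illustrative choices only.

```python
from itertools import product


def cell_coords(p, cell_size, grid_dim):
    """Integer grid coordinates of point p, clamped to the grid bounds."""
    return tuple(min(max(int(c // cell_size), 0), grid_dim - 1) for c in p)


def build_grid(positions, cell_size, grid_dim):
    """Group particle indices by grid cell using a counting sort.

    Returns (sorted_ids, starts), where the particles in cell c are
    sorted_ids[starts[c]:starts[c + 1]].
    """
    num_cells = grid_dim ** 3
    cells = []
    for p in positions:
        cx, cy, cz = cell_coords(p, cell_size, grid_dim)
        cells.append((cx * grid_dim + cy) * grid_dim + cz)

    # Pass 1: histogram of particles per cell.
    counts = [0] * num_cells
    for c in cells:
        counts[c] += 1

    # Pass 2: exclusive prefix sum gives each cell's start offset.
    starts = [0] * (num_cells + 1)
    for c in range(num_cells):
        starts[c + 1] = starts[c] + counts[c]

    # Pass 3: scatter each particle index into its cell's slot.
    cursor = starts[:-1].copy()
    sorted_ids = [0] * len(positions)
    for i, c in enumerate(cells):
        sorted_ids[cursor[c]] = i
        cursor[c] += 1
    return sorted_ids, starts


def neighbors(i, positions, cell_size, grid_dim, sorted_ids, starts, radius):
    """Indices of particles within `radius` of particle i (radius <= cell_size)."""
    px, py, pz = positions[i]
    bx, by, bz = cell_coords(positions[i], cell_size, grid_dim)
    found = []
    # Only the 27 cells surrounding particle i can contain neighbors.
    for dx, dy, dz in product((-1, 0, 1), repeat=3):
        cx, cy, cz = bx + dx, by + dy, bz + dz
        if not (0 <= cx < grid_dim and 0 <= cy < grid_dim and 0 <= cz < grid_dim):
            continue
        c = (cx * grid_dim + cy) * grid_dim + cz
        for j in sorted_ids[starts[c]:starts[c + 1]]:
            if j == i:
                continue
            qx, qy, qz = positions[j]
            if (px - qx) ** 2 + (py - qy) ** 2 + (pz - qz) ** 2 <= radius ** 2:
                found.append(j)
    return sorted(found)
```

In a parallel setting, the histogram, prefix sum, and scatter passes are the steps that would typically each become a data-parallel kernel; the sketch keeps them as separate loops to make that structure visible.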

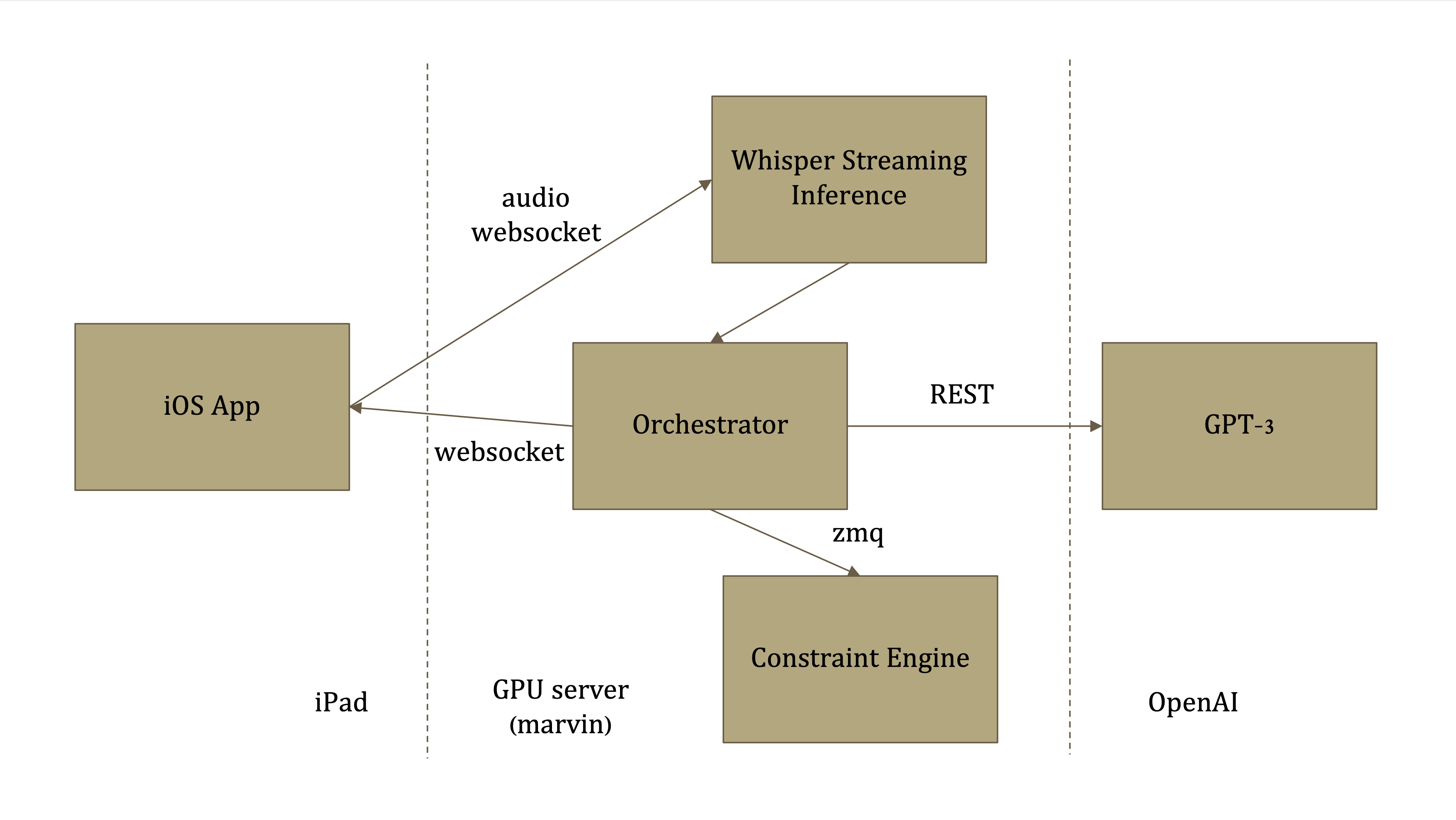

Galen October 2022

Real-time surgical feedback with interactive AI.

Galen is an iOS app and platform that combines state-of-the-art speech-to-text recognition, few-shot text parsing, and medical data analysis to prevent surgical errors.

Galen aims to keep track of surgical actions and verify that they are safe. To do so, it analyzes surgical audio using Whisper and GPT-3 and certifies that each surgical operation is safe with respect to patient information and an existing database of drug interactions.

This project received Best Distributed Systems Hack and placed 2nd in the iOS Development Challenge and YC Startup Pitch at HackMIT 2022.

Links: GitHub

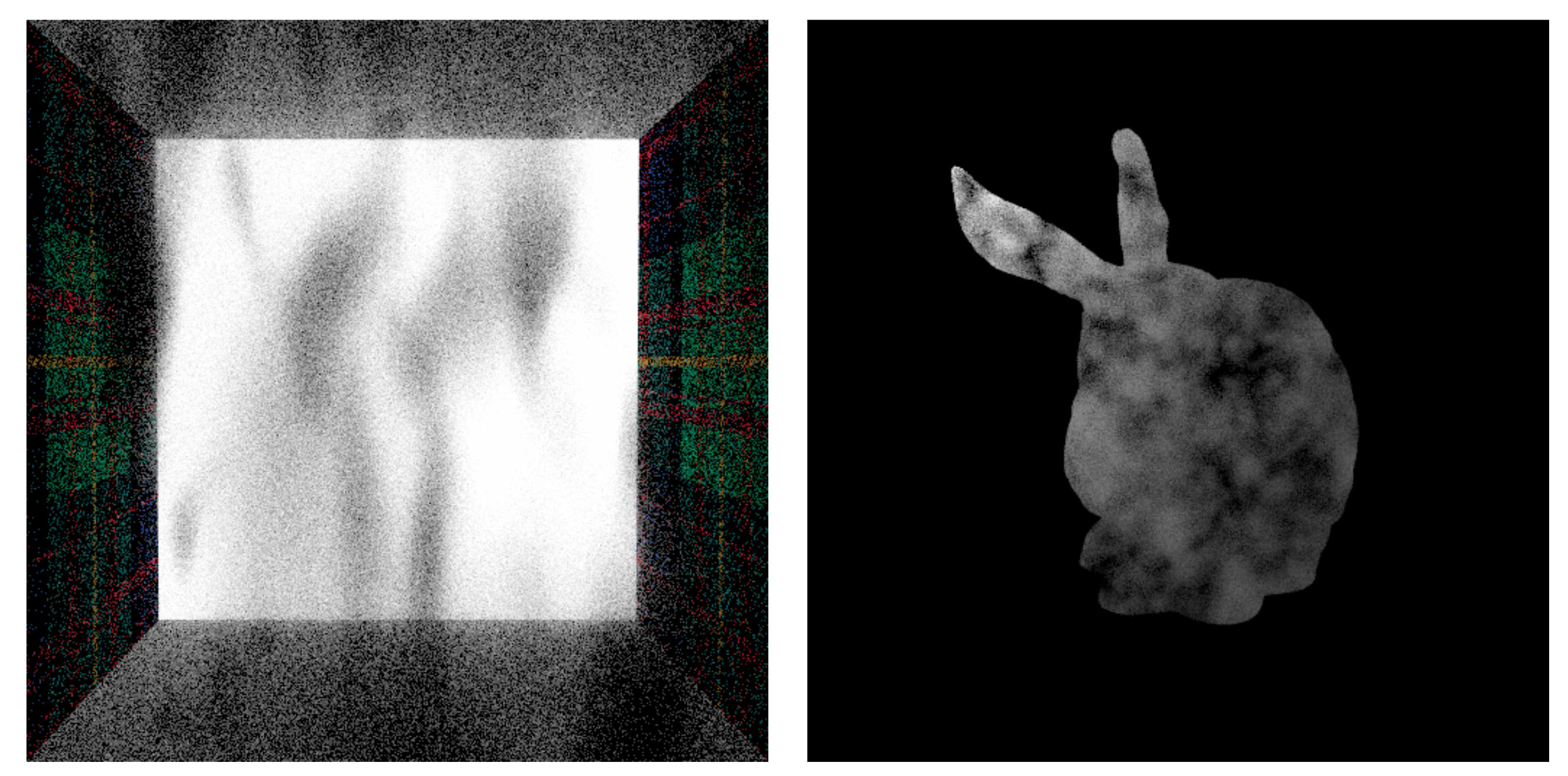

Path Tracer May 2022

Physics-based path tracer in C++.

This is a physics-based path tracer implemented in C++. It features path tracing with multiple importance sampling, quasi-Monte Carlo sampling, texture mapping, various microfacet models and integrators, and volumetric rendering.

Several methods for rendering heterogeneous media are implemented, including ratio and delta tracking, analog decomposition tracking, spectral tracking, and spectral multiple importance sampling.
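
As a rough illustration of one of these methods, the following Python sketch shows delta tracking for sampling a collision distance along a ray. It is not taken from the renderer, which is written in C++. The names `sigma_t`, `sigma_max`, and `estimate_transmittance` are hypothetical, and the medium is reduced to a 1D extinction function along the ray, with `sigma_max` assumed to be a positive upper bound on `sigma_t` over the whole segment.

```python
import math
import random


def delta_tracking(sigma_t, sigma_max, t_max, rng):
    """Sample a real collision distance in [0, t_max), or None if the ray escapes.

    sigma_t:   function mapping distance along the ray to the extinction coefficient
    sigma_max: majorant, assumed to satisfy sigma_max >= sigma_t(t) > 0 bound-wise
    """
    t = 0.0
    while True:
        # Take an exponential step as if the medium were homogeneous at sigma_max.
        t -= math.log(1.0 - rng.random()) / sigma_max
        if t >= t_max:
            return None  # left the medium without a real collision
        # Accept the tentative collision as real with probability
        # sigma_t(t) / sigma_max; otherwise it is a null collision and we continue.
        if rng.random() < sigma_t(t) / sigma_max:
            return t


def estimate_transmittance(sigma_t, sigma_max, t_max, n, rng):
    """Monte Carlo transmittance estimate: fraction of n samples that escape."""
    escapes = sum(
        delta_tracking(sigma_t, sigma_max, t_max, rng) is None for _ in range(n)
    )
    return escapes / n
```

For a homogeneous medium with `sigma_t` equal to `sigma_max`, every tentative collision is accepted, so the estimate should approach `exp(-sigma_max * t_max)` as `n` grows. A looser majorant stays unbiased but spends more iterations on null collisions.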

Links: Writeup

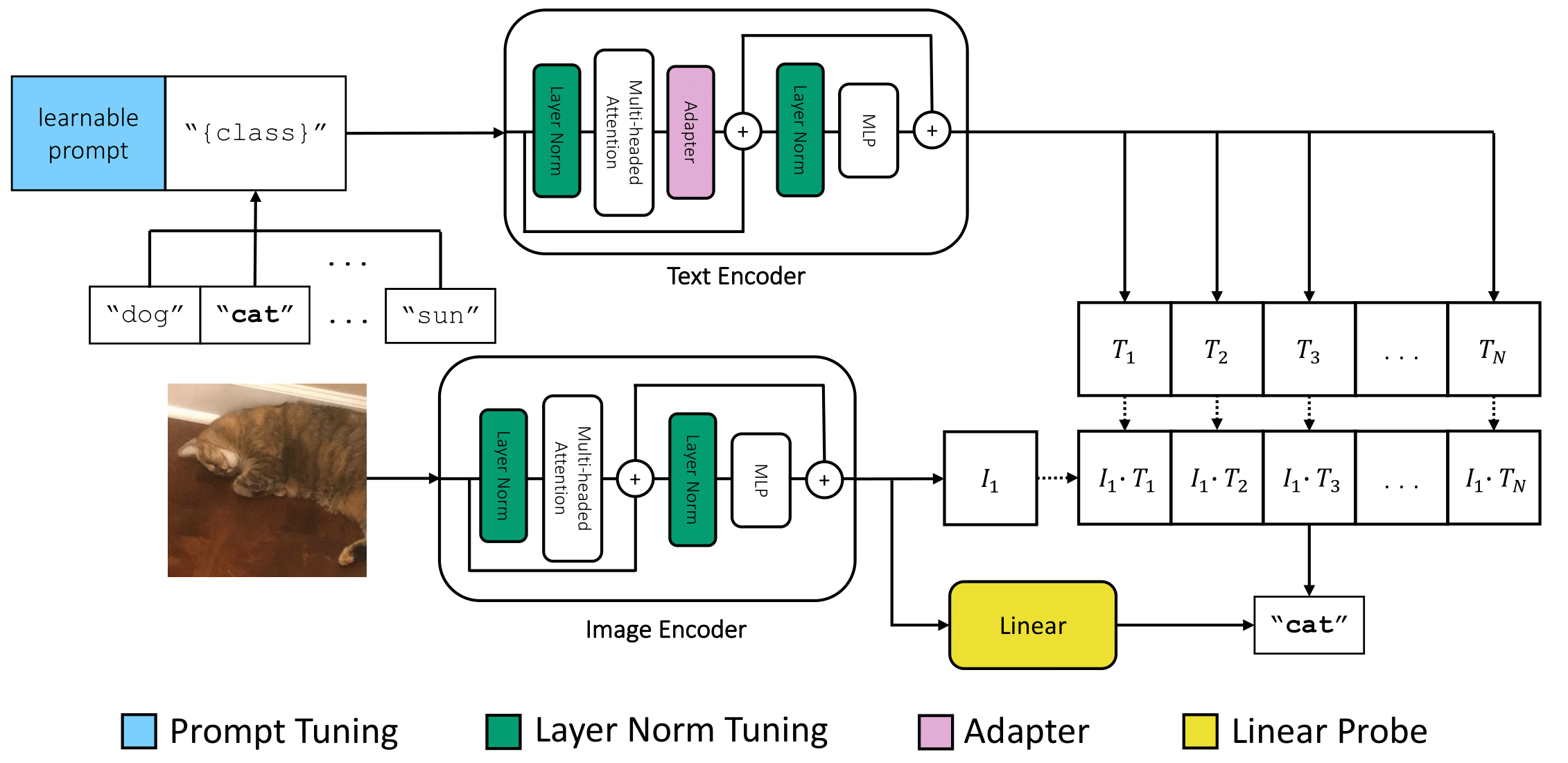

How to Adapt CLIP December 2021

Robust fine-tuning of large-scale pretrained models.

Pre-training large-scale vision and language models (e.g. CLIP) has shown promising results in representation and transfer learning. This research project (advised by Prof. Deepak Pathak, Dr. Igor Mordatch, and Dr. Michael Laskin) investigates how to efficiently adapt these models to out-of-distribution downstream tasks. We analyze several fine-tuning methods on a diverse set of image classification tasks along two spectra: the amount of downstream data and its similarity to the pretraining data.

Our primary contribution is to show that just tuning LayerNorm parameters is a surprisingly effective baseline across the board. We further demonstrate a simple strategy for combining LayerNorm-tuning with general fine-tuning methods to improve their performance and benchmark them on few-shot adaptation and distribution shift tasks. Finally, we provide an empirical analysis and recommend general recipes for efficient transfer learning of CLIP-like models.

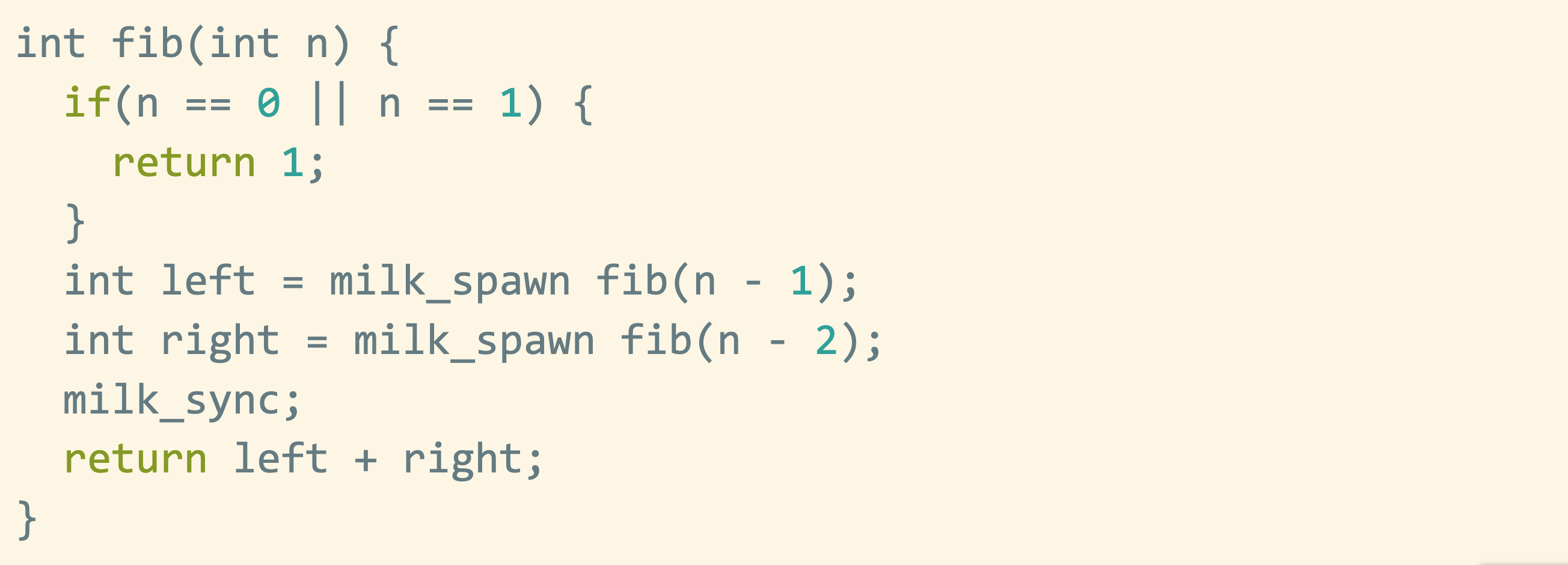

C0 Compiler December 2021

C0 to x86 compiler.

This is a compiler, implemented in Rust, from C0 (a safe subset of C) to x86 assembly. It features lexing and parsing, type-checking, code generation and emission, register allocation and coalescing, and several peephole optimizations.

Additionally, it supports a threading runtime system inspired by Cilk and general task parallelism with spawn/sync calls and automated work stealing.

Links: Writeup

Invert and Factor May 2021

Unsupervised, interpretable image editing.

Invert and Factor is an image editing web interface combining GAN inversion with semantic factorization. Users can upload an image of a face to the interface and modify it by manipulating sliders which correspond to semantic attributes.

Semantic factorization provides semantically meaningful directions in the latent space of a generative model in a fast and unsupervised manner. Combining this with a pretrained inversion model and a StyleGAN2 generator allows an uploaded image to be inverted, factorized, manipulated, and re-generated in real time.

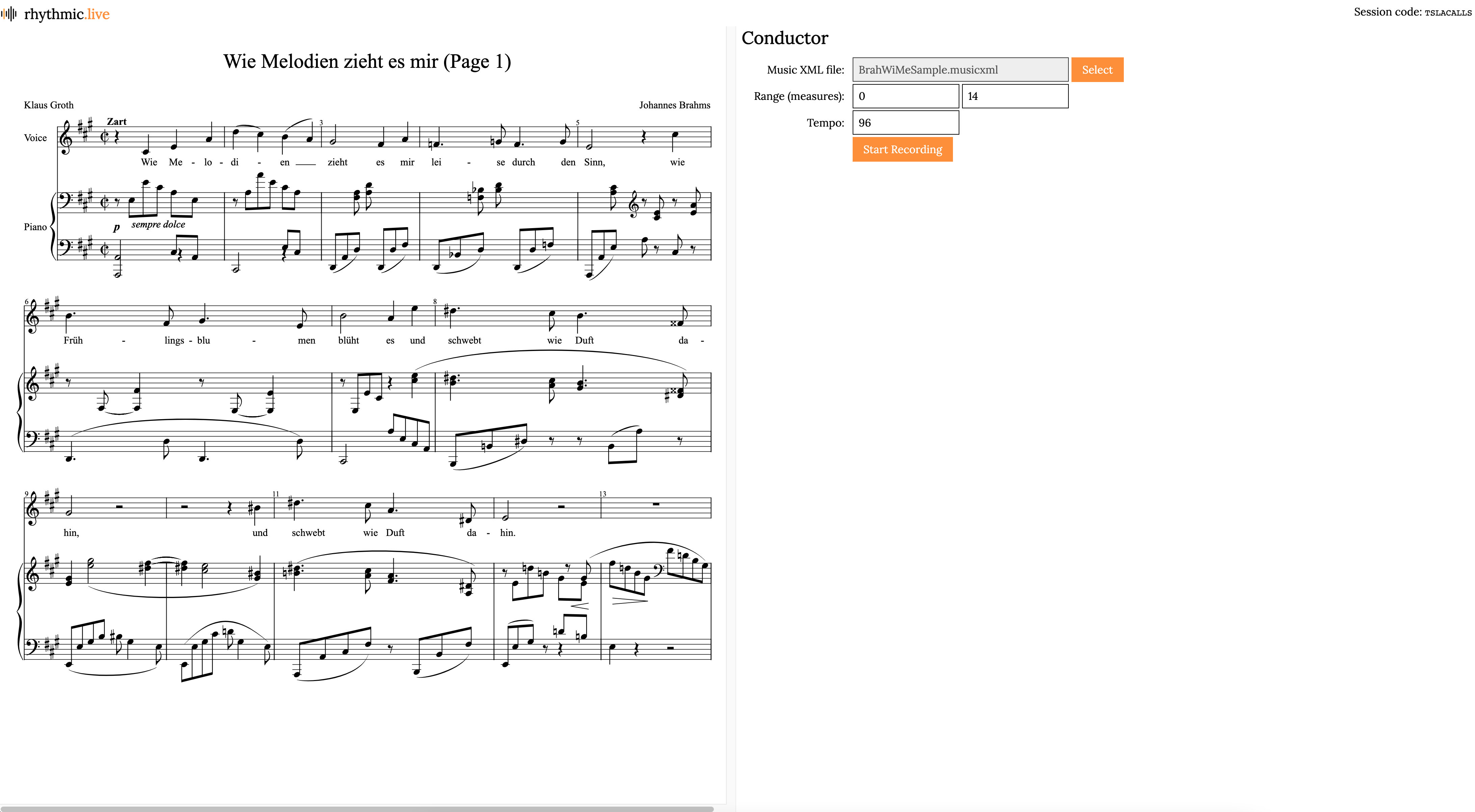

rhythmic.live September 2020

Collaborative music making.

rhythmic.live is a collaborative music making platform providing audio synchronization, interactive and browser-compatible sheet music, and informative analytics.

Musicians can join a recording session, managed by a conductor, from the web app. When a session begins, separate recording sessions are started and the audio streams are reconstructed in sync and stored. After each recording, the conductor can browse recent recordings, listen to them, and receive algorithmic feedback about tone, timbre, and rhythm.

This project won the NASDAQ Music Challenge at HackMIT 2020.

Links: GitHub